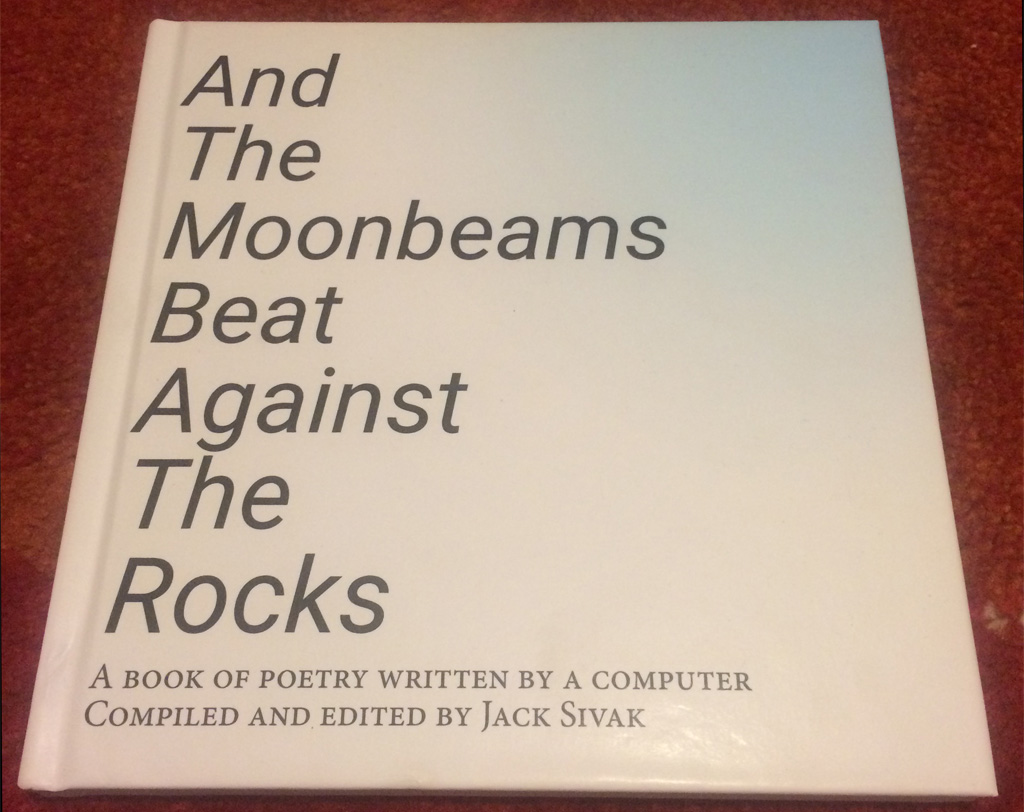

ML-Generated Poetry

I created a book of poetry using machine learning techniques. It includes 100 computer-generated poems and accompanying images, and I had it printed in physical form. It was quite a journey getting here, and it shed some light on the state of machine learning tools and techniques available to those who wish to dabble. Let me walk you through the process.

So I heard about this cool tool called GPT-2. It can generate text that is human-like and could fool you if you weren’t expecting a coherent multi-paragraph story. There’s another tool from a couple years ago that I’ve also wanted to tinker with called Neural Style. It mashes up images in interesting ways - originally used to impose an artist’s particular style onto an existing graphic. Ok, so text with accompanying images is the general goal, but it needs to be more specific.

Since GPT-2 isn’t a great coherent storyteller, if I want text that is truly 100% computer-generated I can’t generate traditional stories. Poetry seems like a good choice - it can be vague, there don’t need to be too many consistent specifics, and any oddities could just be passed off as metaphor. Generating poetry is already quite popular in Natural Language Processing though, so is there something I could do differently? How about mimicking poems already translated to English? That way they’ll already be a little ‘off’, so more quirks of the generated text might be able to slip through. Off to Project Gutenberg to get some translated poetry (I ended up going with Chinese poetry).

With the text corpus in hand, it’s just a matter of cleaning out some duplicate poems, then repeating a loop of generating some text, checking the output’s quality, and tweaking the parameters before generating more text. Since this is machine learning, a GPU would be handy. Luckily, Google offers free GPU time in Google Colab. Even better, they offer free credits to Google Cloud, which has a whole slew of beefy GPUs to choose from. After generating hundreds of poems and thousands of lines of unsuitable text, I had a solid base of poems along with the ability to generate as many more as I desired.
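For the curious, the duplicate-cleaning step might look something like the sketch below. This is not the exact script used for the book, just a minimal illustration. It assumes the poems have already been split into a list of strings, and the helper names `normalize` and `dedupe_poems` are made up for this example. The idea is to compare poems after stripping case, punctuation and spacing, so that copies differing only in formatting count as the same poem.

```python
import re


def normalize(poem: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace.

    Two copies of a poem that differ only in formatting end up
    with the same normalized key.
    """
    text = re.sub(r"[^\w\s]", "", poem.lower())
    return " ".join(text.split())


def dedupe_poems(poems):
    """Return the poems with duplicates removed, keeping the first copy of each.

    Assumption: `poems` is a list of strings, one poem per entry.
    """
    seen = set()
    unique = []
    for poem in poems:
        key = normalize(poem)
        # Skip empty entries and anything already seen.
        if key and key not in seen:
            seen.add(key)
            unique.append(poem)
    return unique


if __name__ == "__main__":
    sample = [
        "The moon is bright.\nI think of home.",
        "the moon is bright\n  I think of home",
        "Autumn wind rises.",
    ]
    for poem in dedupe_poems(sample):
        print(poem)
        print("---")
```

This only catches exact matches after normalization. Two different translations of the same poem would still both survive, which may or may not be what you want in a corpus like this.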

Gathering the image data was a more manual process. Looking at each poem, I had to decide what sort of pictures might go with it. Sites like Unsplash, Pixabay, Pexels, and a bunch of others came in handy, offering high-resolution images of whatever was needed with a favorable license. Since the image generation took a “style” and imposed it onto a target image, I also needed style images, which came from the same sources but were more abstract in nature. As with the text, many rounds of iteration followed - pairing up input images to see what worked and what didn’t with particular parameters. Hundreds of images later, a solid selection of candidates had formed.
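One way to keep that trial and error organized is to list every combination up front and work through it. The snippet below is only a rough sketch of that idea, not the process actually used here. The file names are placeholders, and `style_weight` is a hypothetical stand-in for whatever knobs your style transfer tool exposes.

```python
from itertools import product


def build_runs(content_images, style_images, style_weights):
    """List every content/style/weight combination to try.

    Assumption: `style_weight` is a placeholder parameter name,
    not a setting taken from any specific tool.
    """
    return [
        {"content": content, "style": style, "style_weight": weight}
        for content, style, weight in product(
            content_images, style_images, style_weights
        )
    ]


if __name__ == "__main__":
    runs = build_runs(
        content_images=["river.jpg", "mountain.jpg"],  # placeholder file names
        style_images=["ink_wash.jpg", "abstract_blue.jpg"],
        style_weights=[1.0, 5.0, 10.0],
    )
    print(len(runs), "combinations to try")
    print(runs[0])
```

The number of runs grows quickly (2 x 2 x 3 is already 12 here), which goes some way to explaining how "hundreds of images" happens.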

Armed with the poems and accompanying images, it was just a matter of learning the industry-standard Adobe InDesign (which I can only give a lukewarm review of) and creating the book itself. Getting it printed was another challenge, because you usually need to order 100 copies or more to get a reasonable price-per-book for a quality hardcover with 200+ pages. However, the website bookbaby.com allows you to order a single copy as a sample for a modest sum, and it turned out great!

Looking back on the process and output, I would use a bigger corpus next time - many themes were repeated (Autumn was apparently a particularly popular season in the corpus) and the mood leaned toward depression. I’m curious how adding more content, or even poetry translated from other languages, would affect the recurring themes, mood and language. I’ve also noticed more incoherence in the longer poems, which is unsurprising given GPT-2’s limitations (and the limitations of NLP in general at the time). However, longer poems still seem to have sections that feel “deep”, or are strikingly coherent within themselves. I’m curious what an updated version of the GPT algorithm might be capable of.

If your interest has been piqued, you can download an EPUB of the book here or the MOBI version here.